As I seem to be saying a lot this month, I’ve been using the Internet for a long time. I remember when Gopher was more useful than HTTP. I remember when, with a little dedication, you could surf a significant portion of the entire web. I mean, I’m not Berners-Lee or anything, but I started on the web about three years after he did.

That, it should go without saying, was a long time ago.

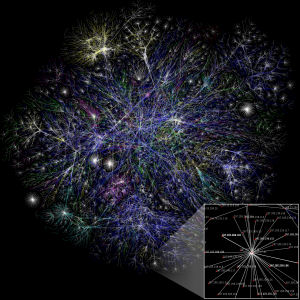

This week Google announced that their index contains a trillion pages. A trillion.

Let me put that in context for you: if you spent one second on each page, looking at a trillion web pages would take you over 31,000 years. That’s with no sleep and no bathroom breaks. No human is ever going to see even a small portion of the current web. And that’s before you count the tremendous masses of information that are added every day. Sure, there’s probably a million pages of photos of bacon taped to a cat, etc., but still, a trillion pages.
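
If you want to check my math, here is a quick back-of-the-envelope sketch in Python. The function name `years_to_view` and the choice of a 365.25-day year are my own assumptions for illustration; the one-second-per-page pace comes from the paragraph above.

```python
# Back-of-the-envelope check: how long would it take to look at every page?
# Assumption: a year is 365.25 days (a Julian year), viewed around the clock.
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60


def years_to_view(pages, seconds_per_page=1.0):
    """Return the years needed to view `pages` pages at a constant pace."""
    return pages * seconds_per_page / SECONDS_PER_YEAR


years = years_to_view(1_000_000_000_000)  # one trillion pages
print(f"About {years:,.0f} years")
```

Slow down to ten seconds a page by passing `seconds_per_page=10` and the number gets ten times worse.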

Oh, and in case you’re somehow blasé about that trillion-page number… don’t forget that what Google indexes is the surface web, and everyone says the deep web is several orders of magnitude bigger than the surface. Several orders of magnitude bigger than a trillion pages. Chew on that for a minute.
